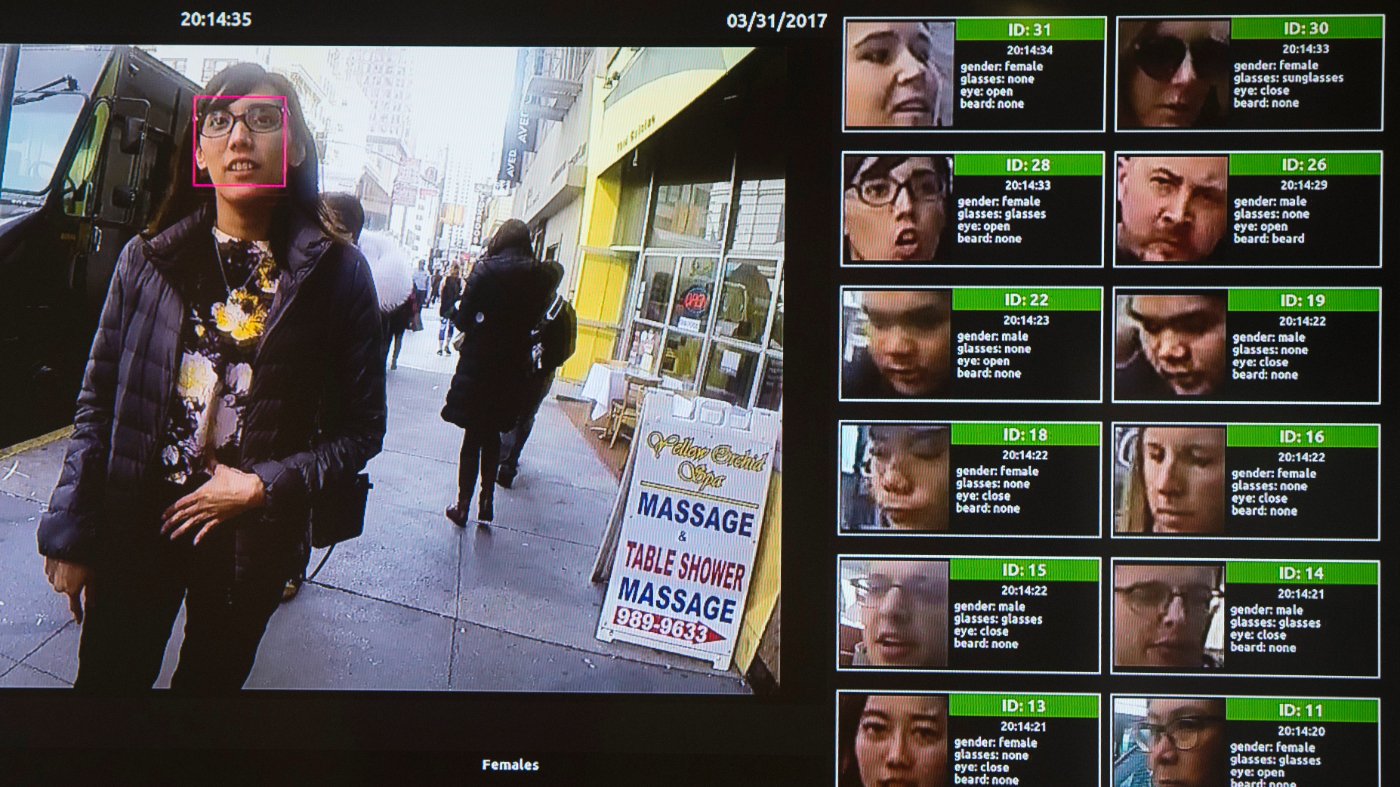

Angela Lipps, a Tennessee grandmother, spent more than five months behind bars after a police department relied on AI-powered facial recognition to arrest her for bank fraud she did not commit. U.S. Marshals, guns drawn, seized her from her home last July while she was babysitting four children. The charge: eight felony counts of bank fraud. The sole evidence: an AI match between her face and surveillance footage from a bank in Fargo, North Dakota—a city she had never visited.

The Fargo Police Department never contacted Lipps before filing charges. They did not verify her travel history, nor did they check her bank records, which would have shown her depositing Social Security checks in Tennessee at the same time the fraud occurred 1,200 miles away. Instead, a detective ran her image through a facial recognition database and concluded she was the suspect based on “facial features, body type and hairstyle and color.”

Lipps spent 108 days in a Tennessee jail without bail. North Dakota officers did not transport her to Fargo until late October, and they did not interview her until mid-December—more than five months after her arrest. When they finally reviewed her records, the case collapsed. Charges were dismissed on Christmas Eve. By then, she had lost her home, her car, and her dog. Local defense attorneys and a nonprofit pooled funds for a hotel stay that night, then drove her home on Christmas Day.

What makes this case particularly egregious is that the West Fargo Police Department, investigating similar bank fraud, used the same AI software and got the same hit on Lipps but declined to press charges. They concluded that an AI match alone did not meet the Fourth Amendment’s probable cause standard. Two departments used the same technology and got the same match, but one acted responsibly and the other recklessly.

Lipps’s arrest is at least the eighth documented wrongful arrest in the U.S. stemming from AI facial recognition. A Washington Post investigation found that police failed to verify alibis in three-quarters of those cases. A National Institute of Standards and Technology study showed the technology misidentifies Black and Asian faces at 10 to 100 times the rate of white faces, yet Lipps is white, which underscores that the problem is not limited to any one demographic. The technology is simply inaccurate and should not be the basis for depriving Americans of their freedom.

Congress has been slow to act, leaving states to fill the void. Fifteen states have some form of facial recognition legislation, but most laws are weak. Stronger standards should mandate corroboration of algorithmic identifications, regular accuracy audits, and accountability when departments fail to do basic investigative work. As Tyler Deaton, founder of the American Security Fund, wrote, “Technology is a tool, and facial recognition does not solve a crime by itself. It gives a detective a lead, one that still requires verification, corroboration, and the kind of rudimentary follow-through that has been the baseline of police work for a century.”

Lipps is pursuing a civil lawsuit, but lawsuits address what already went wrong. The broader issue remains: a single phone call could have kept her home with her grandchildren. Without standardized protocols—like those being debated around DHS funding and technology oversight—such failures will continue. As Deaton noted, “Two departments in the same state should not be able to use the same technology in two completely different ways—one responsibly and one recklessly.”

Americans deserve better than a system where a machine’s “maybe” is treated as probable cause. The lesson from Lipps’s ordeal is stark: AI is not a substitute for police work.