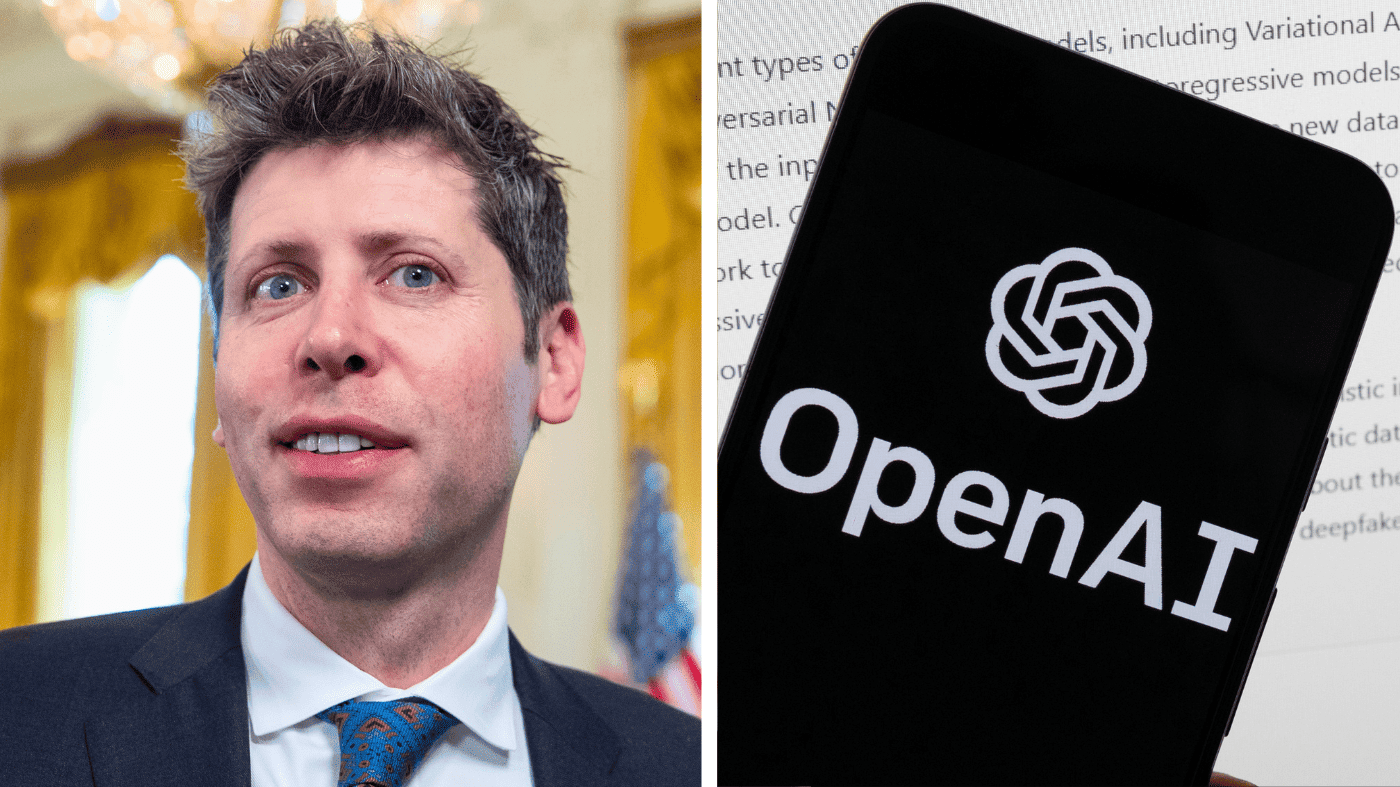

OpenAI chief executive Sam Altman has formally apologized to the community of Tumbler Ridge, British Columbia, after his company failed to report alarming ChatGPT messages from a teenager who went on to kill eight people and wound more than two dozen others earlier this year.

In a letter addressed to the small mountain town, Altman expressed deep regret that OpenAI did not flag the account of 18-year-old Jesse Van Rootselaar to police after banning it last June, roughly seven months before the attack. Authorities said the shooter killed her mother and younger brother before opening fire at a local secondary school, then died of a self-inflicted gunshot wound.

“I am deeply sorry that we did not alert law enforcement to the account that was banned in June,” Altman wrote. “While I know words can never be enough, I believe an apology is necessary to recognize the harm and irreversible loss your community has suffered.”

Altman’s letter, shared publicly by British Columbia Premier David Eby on Friday, also offered condolences to the victims and their families. “No one should ever have to endure a tragedy like this,” Altman wrote. “I cannot imagine anything worse in this world than losing a child.”

The incident has reignited debate over the responsibility of artificial intelligence companies to monitor and report potential threats. Critics argue that OpenAI, which has aggressively expanded its AI tools, should have acted sooner. The company has faced similar scrutiny in the past over gaps in its content moderation.

Eby called the apology “necessary” but “grossly insufficient for the devastation done to the families of Tumbler Ridge.” In a separate post on X, he vowed to “continue to stand with Mayor Darryl Krakowa and the people of Tumbler Ridge in the difficult work ahead.”

Altman said OpenAI is now focused on strengthening partnerships with local officials “to help ensure something like this never happens again.” The company has not disclosed specific policy changes, but it has faced pressure from lawmakers and advocates to improve its threat-detection mechanisms.

The tragedy has also sparked broader conversations about school safety and digital surveillance. Some experts argue that AI platforms need clearer protocols for escalating concerning behavior to authorities, while others warn against over-policing online speech, highlighting the tension between public safety and user privacy.

For Tumbler Ridge, a close-knit community of about 3,000 people, the path to healing remains uncertain. Altman’s apology may be a start, but it has done little to soothe the anger of those who lost loved ones. As Eby noted, words alone cannot undo the devastation.