The American financial system has established robust protections for transactional data that consumers now take for granted, safeguards whose violation would trigger immediate regulatory action and public outcry. Yet healthcare, which handles information far more intimate than spending patterns, operates under weaker privacy standards, even as artificial intelligence becomes ever more deeply integrated into medical practice.

A Troubling Regulatory Disparity

Financial data reveals consumer habits and lifestyle choices, but medical records contain diagnoses, mental health histories, genetic risks, and medication profiles: information that defines personal identity. Even as federal agencies begin shaping AI governance frameworks, healthcare's privacy culture continues to lag behind banking's established norms. The gap persists because neither the public nor the private sector has shown enough urgency to address the imbalance.

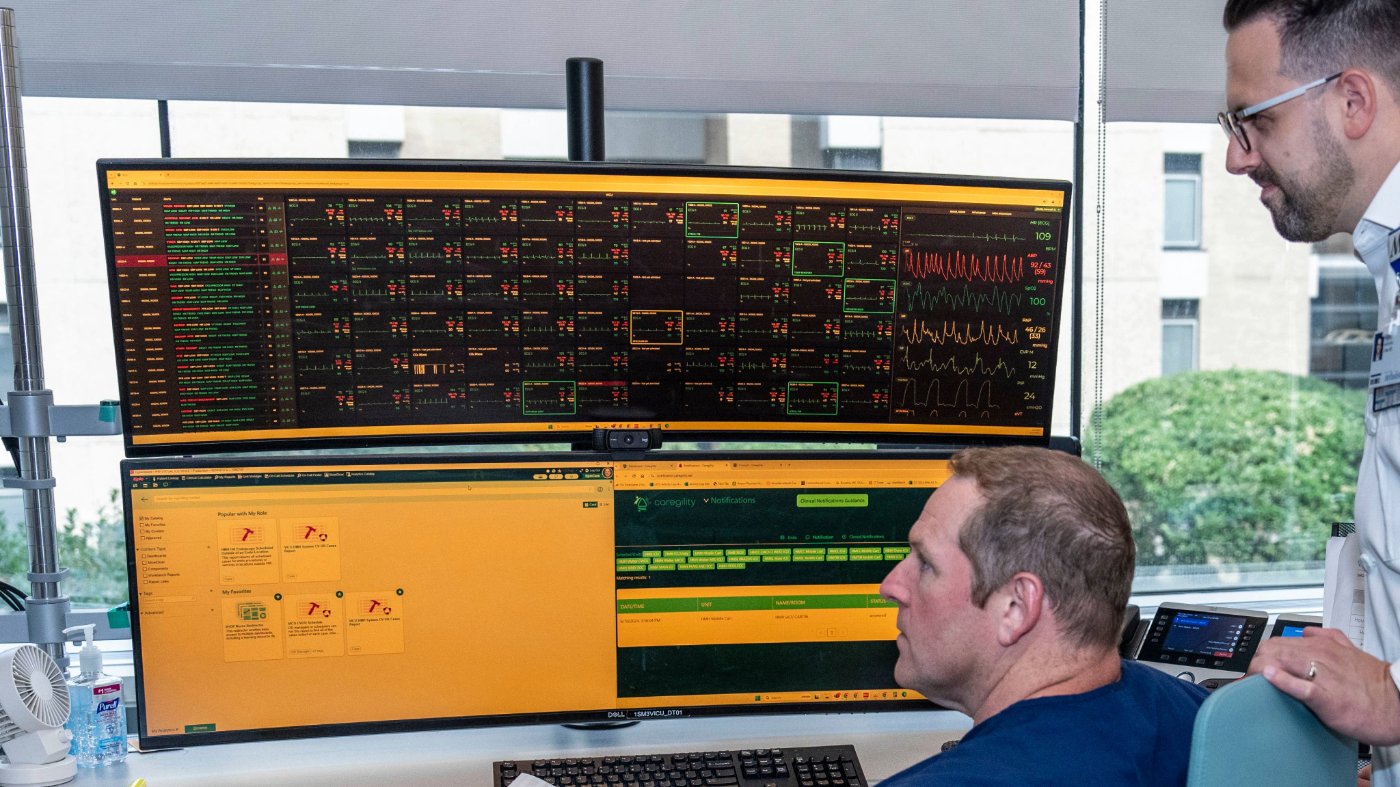

AI's expansion into clinical workflows, combined with continuous biometric data from wearables, has created unprecedented scale and speed in health data utilization. Sensitive information now flows across electronic health records, consumer platforms, and technology vendor networks. These tools promise improved diagnostics and personalized treatments but simultaneously create pathways for biased or flawed data to influence medical decisions, insurance coverage, and patient trust.

Three Critical Lessons from Financial Regulation

First, trust must be built deliberately. Public confidence in modern banking emerged through coordinated standards, strong oversight, and early regulatory signals that new technologies were safe to use. When ATMs debuted, clear security and authentication rules normalized their adoption. Healthcare faces a similar inflection point: AI will only achieve scale if patients and physicians believe consistent safeguards govern its use, and if leaders demonstrate the will to implement those protections.

Second, privacy protections must match data sensitivity. Financial systems assume transactional information is inherently confidential. Medical data is more revealing, yet its protections vary depending on where it is stored or which platform collected it; the same heart-rate reading may be treated very differently in a hospital record than in a consumer fitness app. And unlike a credit card, which can be replaced, medical information is permanently linked to individual identity, a distinction that amplifies the risks as AI accelerates data use. These inconsistencies raise the danger that errors or biases will be magnified, potentially eroding confidence in how information is handled at a time when the system already faces broader strains, such as record-long wait times for specialists that have escalated into a public health crisis.

Third, technological capability doesn't eliminate the need for professional oversight. Digital banking improved convenience but did not diminish the need for governance, accountability, or expert supervision. In medicine, AI can support clinical judgment but cannot assume responsibility for patient outcomes. Physicians must remain central in evaluating how tools are deployed, how data is interpreted, and how risks are communicated. Meaningful oversight, however, requires AI developers to provide tools that track information sharing, monitor usage, and obtain consent before patient data is used to train algorithms. That need grows more urgent as corporate consolidation in healthcare raises systemic patient safety concerns.

The Path Forward: Building a Trustworthy Ecosystem

Healthcare is undergoing a rapid transformation in which patients increasingly trade personal health information for convenience and access, while also turning to general-purpose AI tools for medical guidance. Despite the high stakes, the policy response remains uneven. The United States built a trusted financial system by treating accountability, standardization, and privacy as foundational elements, with leaders acting decisively when new risks emerged.

Creating a health data ecosystem that deserves similar confidence requires equivalent resolve. What's needed is a national privacy and information accountability infrastructure that covers AI developers and the companies storing large volumes of health data. Congress should pass legislation establishing common baseline protections for patients both within traditional healthcare systems and when they use consumer-facing digital health tools, apps, and external AI systems. Such legislation is especially timely now that new national polling shows healthcare costs overtaking the economy as voters' top concern.

AI will undoubtedly shape medicine's future. Whether it strengthens or weakens patient-physician relationships depends not merely on technological capability, but on society's willingness to act now to establish the trust such transformation requires. The financial sector's example demonstrates that robust protections are achievable—but only through deliberate, coordinated effort across government and industry.